Apologize!!! Due to the limitations of free tier AWS EC2 instance it is not possible to run this large models. We can improve this model by changing the model architecture, and adding more training data. At this level I wanted to showcase my work towards implementation and deployment of Deep Learning model.

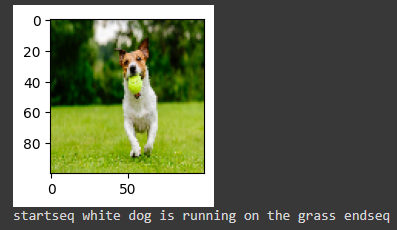

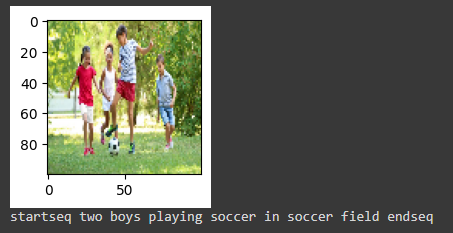

These images shows how it runs on Google Colabs with InceptionV3 model. It does a very good job.

BLUE (weight: 1.0): 0.505570

BLUE (weight: 0.5): 0.263104

BLUE (weight: 0.3): 0.182887

BLUE (weight: 0.25): 0.089559

_________________________________________________________________________________________

Layer (type) Output Shape Param # Connected to

=========================================================================================

input_11 (InputLayer) [(None, 34)] 0 []

input_10 (InputLayer) [(None, 2048)] 0 []

embedding_4 (Embedding) (None, 34, 128) 1122048 ['input_11[0][0]']

dropout_8 (Dropout) (None, 2048) 0 ['input_10[0][0]']

dropout_9 (Dropout) (None, 34, 128) 0 ['embedding_4[0][0]']

dense_12 (Dense) (None, 128) 262272 ['dropout_8[0][0]']

lstm_4 (LSTM) (None, 128) 131584 ['dropout_9[0][0]']

add_4 (Add) (None, 128) 0 ['dense_12[0][0]',

'lstm_4[0][0]']

dense_13 (Dense) (None, 128) 16512 ['add_4[0][0]']

dense_14 (Dense) (None, 8766) 1130814 ['dense_13[0][0]']

=========================================================================================

Total params: 2663230 (10.16 MB)

Trainable params: 2663230 (10.16 MB)

Non-trainable params: 0 (0.00 Byte)

_________________________________________________________________________________________